AI Driven Code Reviews - Lessons

With AI writing most of our code, we reviewers have now become bottlenecks.

Our Team has recently been exploring & experimenting with AI driven code reviews. This post is about how we’ve structured the documents and our learnings so far.

Summary (TLDR)

- Create two documents: a

code-review-checklist.mdwhich contains your code review checklist and acode-review.mdfile with instructions & rules to the agentic reviewer. - Assign weights to the code review checklist items to ensure a more deterministic outcome

- Add open-ended questions based on coding principles that the agent can ask about the code to catch issues you’ve not thought of yourself

- Manage the context

- Avoid depending completely on AI: add automation

- Try & make it a learning experience for the developers

- As with all AI prompts, start small & iterate

Details

Documents

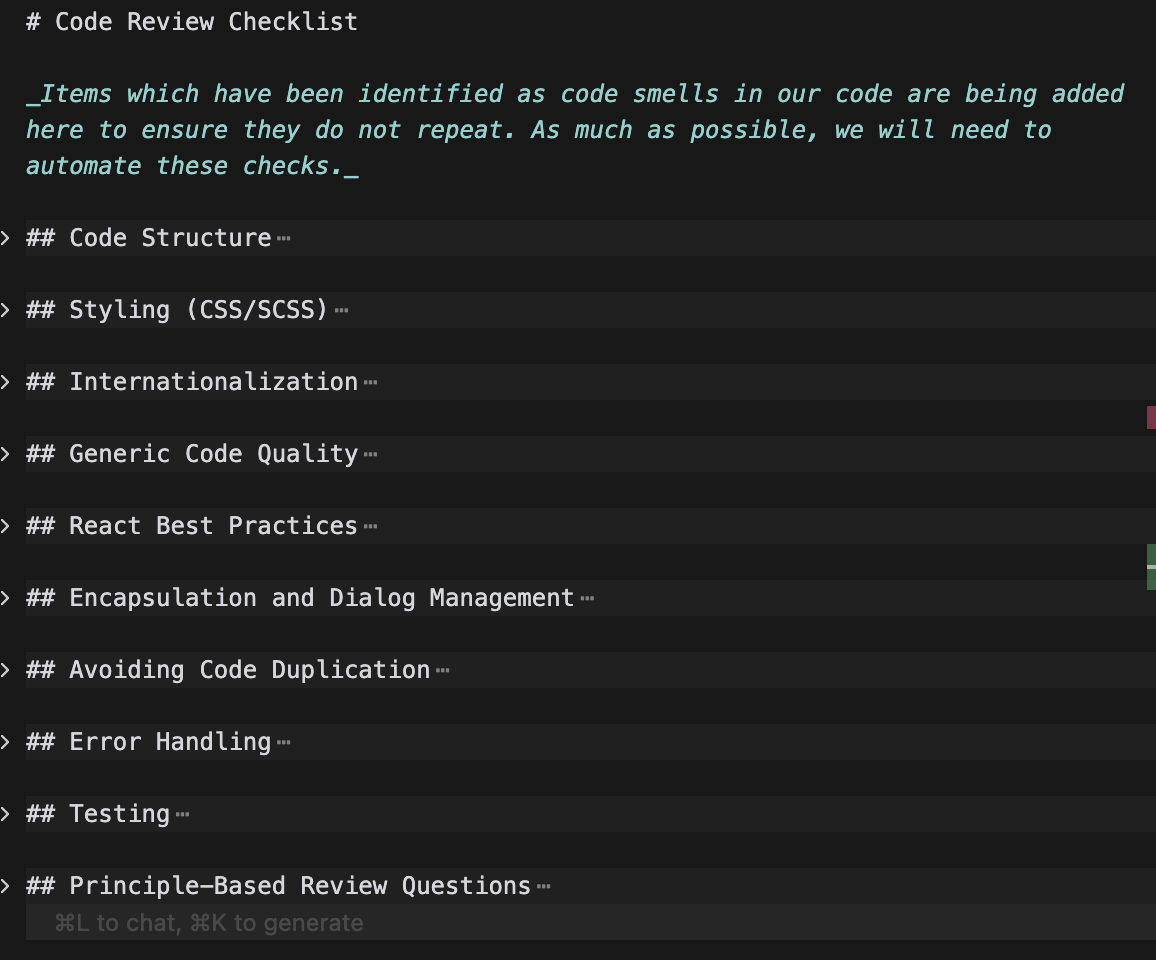

We have created a few documents to help developers & the AI agent do code reviews.

- A

code-review-checklist.mdcontains actual instructions on important criteria to consider when doing a code review. This document is meant as a guide to reviewers & to developers & is regularly updated after manual code reviews with the design & code smells we find.

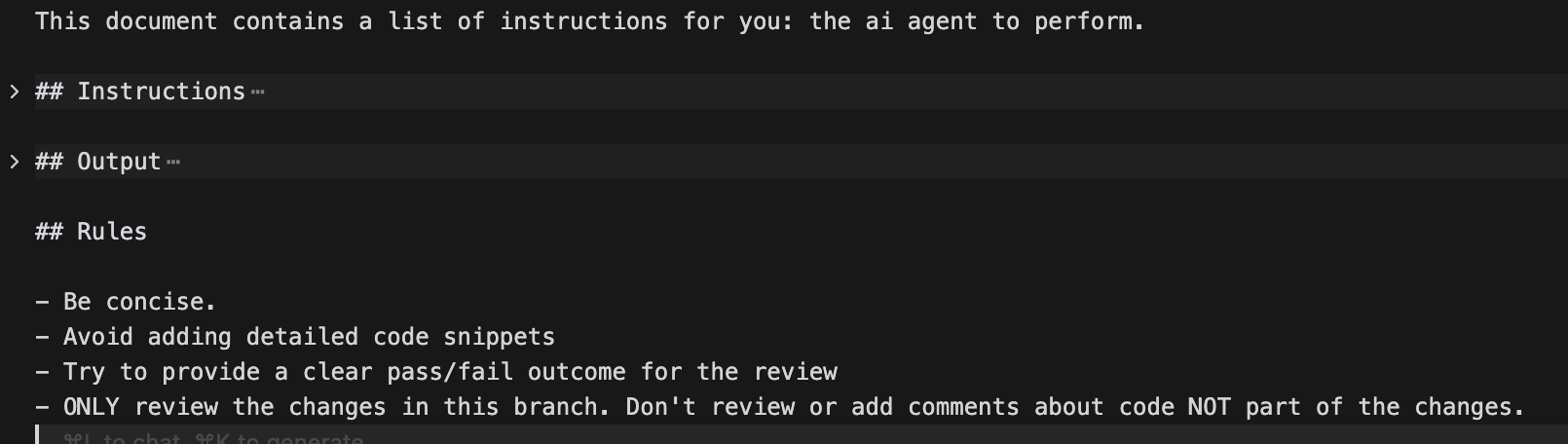

- A second file:

code-review.mdis meant purely as instructions to the AI agent.

This document contains the sections:

Instructions

These are instructions to the agent on HOW to do the review. They break down the task into multiple steps which tell the Agent

- how to identify the relevant code

- what artefacts to create as an intermediate step (useful later in the review or if the review is repeated)

- where to look for additional information or references.

- what output to create. This ensures that all code reviews result in a consistent output

Example instruction

- Action: Switch to “Plan mode”

- Assume that the target branch is

main- Action: Understand the changes done in this branch.

- Compare the list of files modified in this branch compared to the target branch.

- For EACH modified file:

- Run

git fetch origin && git diff origin/<target-branch>...<current-branch> -- <file>to see exact changes- Identify ALL new functionality (ex: new props, new methods, new behavior, new UI elements)

- Document what changed vs the target Branch

- Check if corresponding test file exists and covers the changes

- Action: Review each commit & look for code-smells. Use

code-review-checklist.mdas reference- Create a file under the

logsfolder (create the folder if necessary) ascode_review_comments.mdwith the findings & recommendations

Rules

These are rules on how to do the review. They are necessary to ensure the AI agent stays within the guard rails, tokens are not wasted by verboseness & context stays small. Example:

Example rules

- Provide a clear pass/fail outcome for the review

- ONLY review the changes in this branch. Don’t review or add comments about code NOT part of the changes.

Learnings

Add Weights

The outcomes of agentic code reviews were sometimes flaky. Since the agent wasn’t told what was important, sometimes code reviews would fail for trivial issues & at others pass when there were serious violations. To fix this, we added weights to the sections in the code-review-checklist.md.

Example rules

[MEDIUM] - Review all props and ensure they are necessary.

- WHY: Unnecessary props increase API surface and maintenance burden

[HIGH] - Reusable components and code should not contain context-specific conditional logic that changes behavior based on view/context type.

- Reusable components should have consistent behavior regardless of where they’re used

If a component needs to behave differently in different contexts, the caller should handle the context-specific logic (e.g., filtering, transforming data) before passing props to the component

- WHY: Context-specific logic in reusable components reduces reusability, increases complexity, and violates the Single Responsibility Principle. Callers should prepare data/props appropriately for the component’s API.

More rules were then added to the code-review.md file.

Example rule

- CRITICAL: The review MUST FAIL if ANY of the [HIGH] priority items in the

code-review-checklist.mdfile are violated.

This rule ensures that the reviews are deterministic (or at least more so than before).

Example rule

- [MEDIUM] priority violations should be flagged for developer review. The developer should evaluate each MEDIUM violation and make a decision on whether to address it or document why it’s acceptable in the current context. The review should not automatically fail for MEDIUM violations, but they should be clearly documented for consideration.

This rule was an attempt to try & have the agentic review be a learning experience to junior developers. They are encouraged to explore IF they should incorporate the medium comments & hopefully in the process of this thought exercise, learn a little more than they did before.

Add open ended questions

While the agent was now more deterministic & reliably identified issues we’d documented in the code-review-checklist, we realised we weren’t really harnessing its powers well. To ensure the agent caught issues we’d not explicitly discovered or documented ourselves, we added a section Principle-based Review. This section contains principles which the agent uses as questions to ask itself about the changed code.

Example rule

[HIGH] - Single Responsibility Principle (SRP): Does this change add multiple responsibilities to a component, function, or module?

- Ask: “Is this component/function doing more than one thing? Could responsibilities be separated?”

- WHY: Components with single responsibilities are easier to understand, test, and maintain

[HIGH] - Reusability: Does this change make reusable code context-specific or less reusable?

- Ask: “If this is reusable code, does it now depend on specific contexts or views? Should the caller handle context-specific logic instead?”

- WHY: Reusable components should work consistently across contexts. Context-specific logic belongs in callers.

Manage context intelligently

To ensure AI context is managed intelligently, we’ve tried the following:

Rules which force the agent to be concise & avoid verbose examples etc were added

Example rule

- Be concise.

- Avoid adding detailed code snippets

All code review comments are evaluated by developers through the question: “Could this have been found via automation?”. If the answer is yes, a library, static analysis tool or custom script is written to automate the check. (The scripts-orchestrator has been very useful here in ensuring several different scripts can be run in parallel & in isolation). Once a review comment has been automated, it is moved to a new file automated-checks.md which information similar to the code-review-checklist but is more for documentation. This also ensures that the AI agent’s context is not used for such comments.

Example of an automated rule

[LOW] - Arbitrary Delays in Tests: Use waitForSelector with timeout instead of delay() calls in tests

- WHY: Arbitrary delays make tests flaky and slow

🤖 Automated: Checked by ESLint rule

no-arbitrary-delays-in-tests

Instructions were tweaked to ensure that if the agentic review is repeated, it can benefit from earlier runs & does not need to rebuild the context it needs or start from

Example instruction

- Action: Before starting the review, check if

logs/code_review_comments.mdalready exists. If it does, read it and treat it as context for the code review. The user may be running the command a second time, and the existing review should inform the current review.

Iterate

Developers have been asked to attach the markdown created by the agentic reviewer to the pull request so the effectivness reviewer itself can be evaluated. This has helped us identify gaps in the agentic review & iteratively improve it.