AI-Driven Code Reviews - Optimisation

We’ve been using AI for our code reviews for a couple of months now and we are seeing a definite improvement in the quality of code.

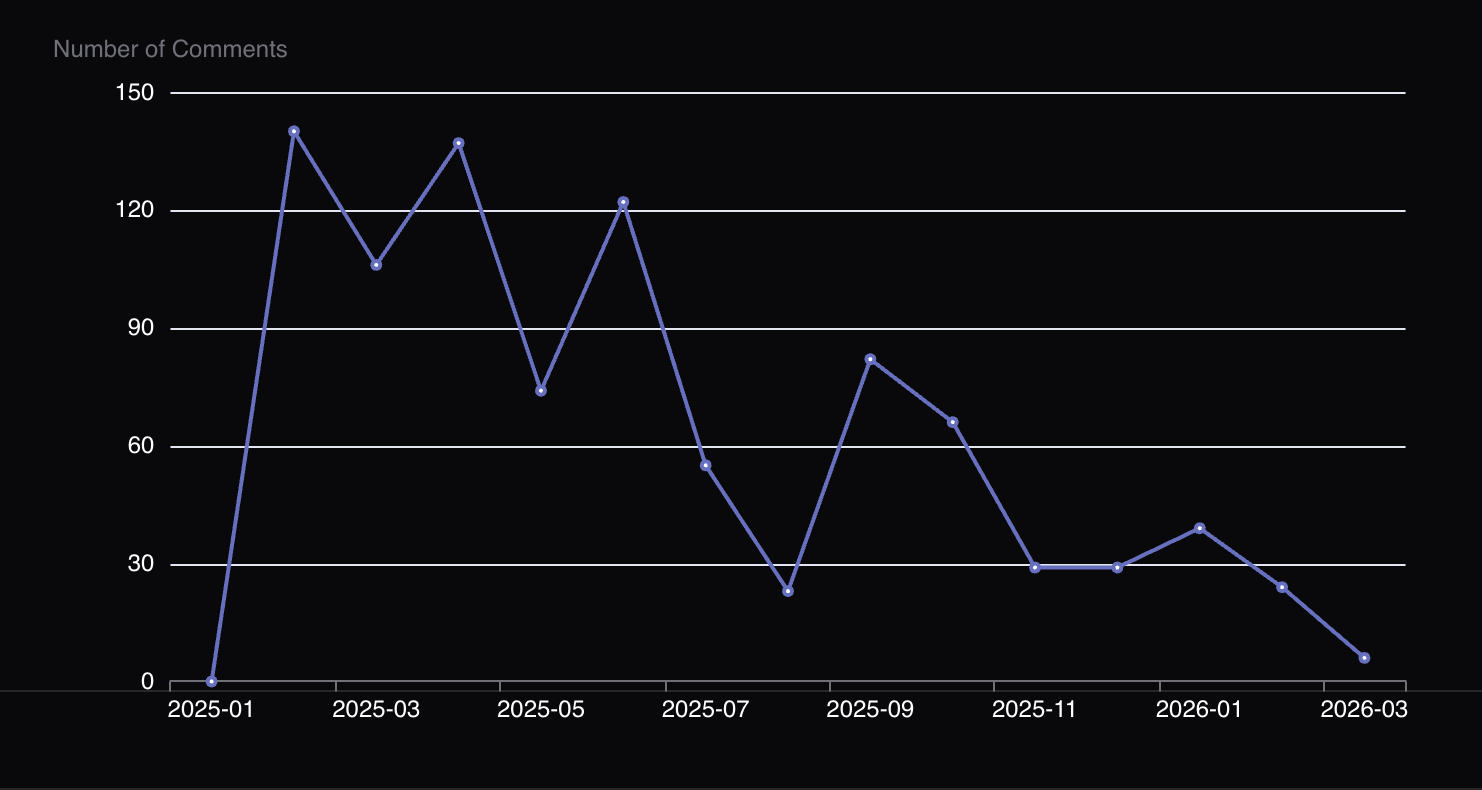

Average number of comments per review

Since the agent is run as a gate before a pull request is created, the number of comments on PRs has steadily decreased.

Note: There is another contributor to this trend. These days, I make more of the changes myself rather than asking the developer to make them. The flip side is that by doing so, I’m depriving developers of the opportunity to learn.

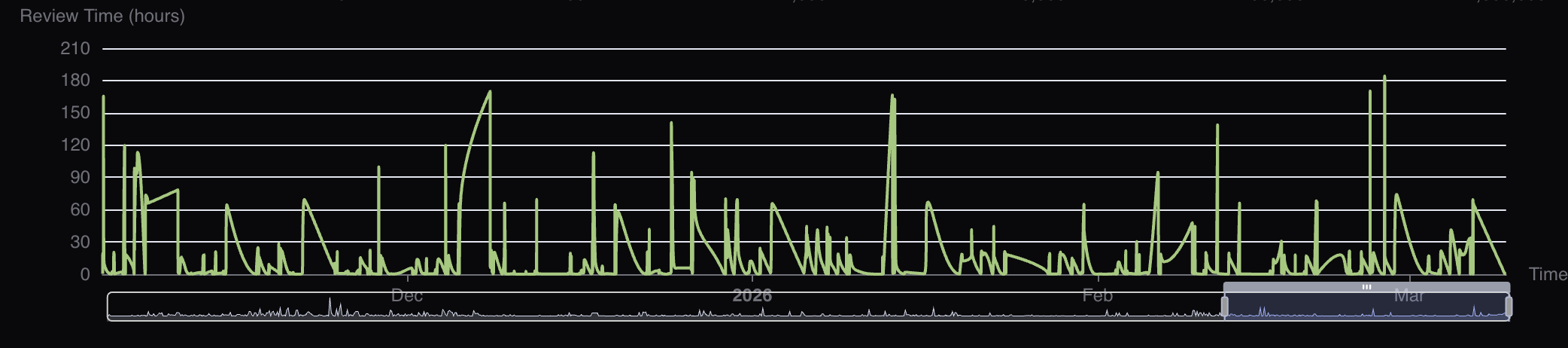

Average review time

You would think that the average review time would come down, but that is NOT the case. If anything, I spend the same amount of time reviewing as before; I just look for deeper patterns now. I also don’t fully trust AI yet. Ensuring the review is effective remains my responsibility, so although I find fewer issues, I still need to review much the same as before.

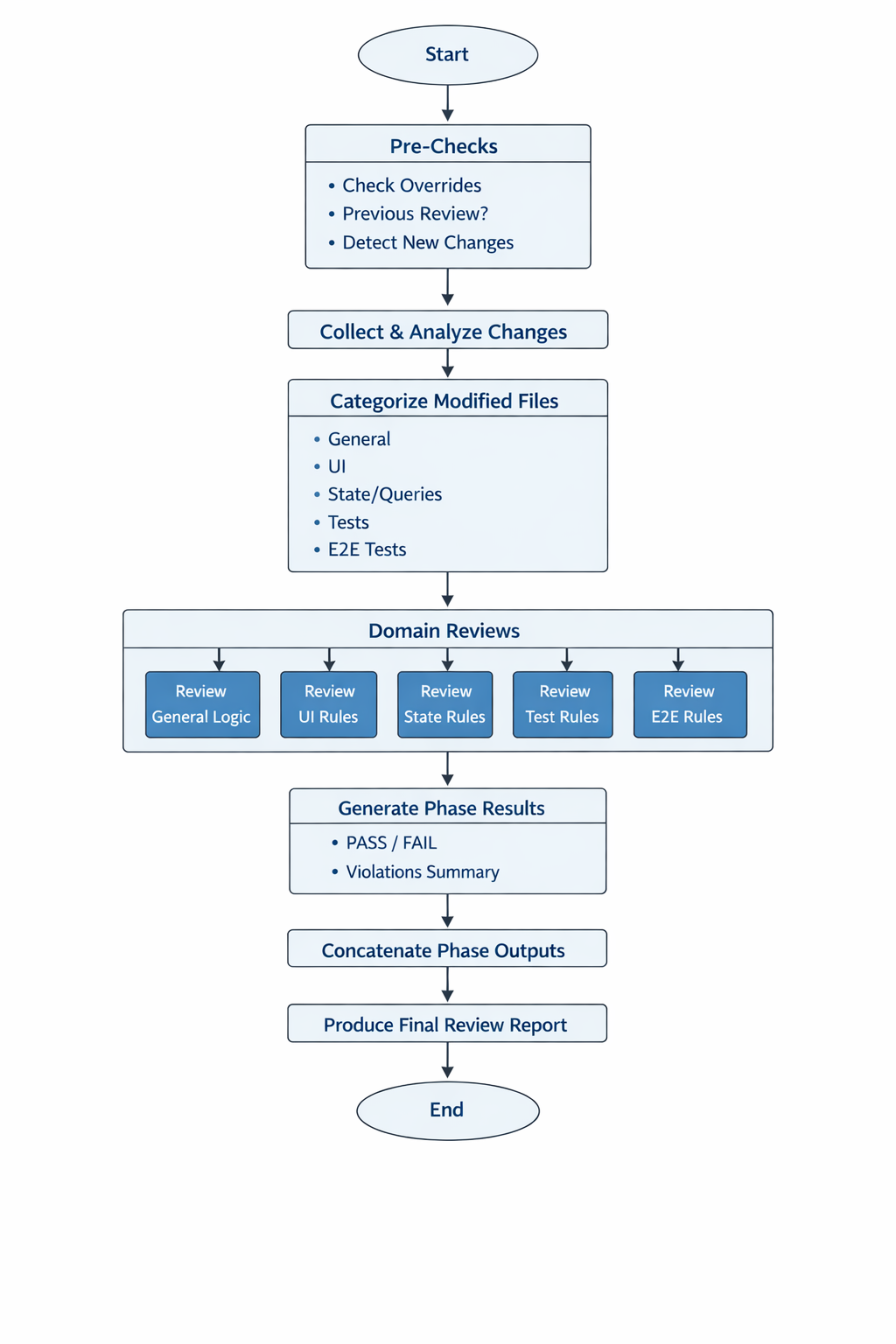

AI reviewer optimisation

While the quality of the reviews has steadily increased, we have also made changes to optimise the process, for instance by trying to reduce token usage.

So far, we have:

- Divided the review into several phases, each with its own context & output

- Given the AI clear instructions on which commands to run (to reduce the need for it to think or interpret instructions)

- Told the AI to keep the final outcome intentionally terse & to create it by concatenating (again using commands) the output of the previous phases
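
As a rough illustration of the last point, here is a minimal sketch of building the final outcome by plain concatenation instead of asking the model to rewrite it. It is not our actual setup: the `Phase` class, the per-phase output files, the `[name]` section labels and the line cap are all assumptions made for the example.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import List


@dataclass
class Phase:
    name: str          # hypothetical phase name, e.g. "lint" or "tests"
    output_file: Path  # assumed location where that phase wrote its findings


def build_final_report(phases: List[Phase], max_lines_per_phase: int = 20) -> str:
    """Concatenate per-phase outputs, in phase order, into one terse report."""
    sections = []
    for phase in phases:
        if not phase.output_file.exists():
            # A missing file means the phase produced nothing; say so explicitly.
            sections.append(f"[{phase.name}]\n(no output)")
            continue
        # Drop blank lines so the report stays compact.
        lines = [ln for ln in phase.output_file.read_text().splitlines() if ln.strip()]
        kept = lines[:max_lines_per_phase]
        if len(lines) > max_lines_per_phase:
            omitted = len(lines) - max_lines_per_phase
            kept.append(f"... {omitted} more lines omitted")
        sections.append(f"[{phase.name}]\n" + "\n".join(kept))
    return "\n\n".join(sections)
```

Because the report is assembled mechanically, no tokens are spent on re-summarising what earlier phases already produced.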

Next Steps

I plan to add metrics around token usage. Claude, for instance, makes it easy to track token usage per-conversation.
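
A first cut of these metrics might look like the sketch below. It assumes each phase run is logged as a record with input and output token counts. The `UsageRecord` shape and its field names are hypothetical, and how the counts are collected from the tool is left out.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class UsageRecord:
    review_id: str      # hypothetical identifier for one review run
    phase: str          # hypothetical phase name
    input_tokens: int
    output_tokens: int


def tokens_per_phase(records: List[UsageRecord]) -> Dict[str, int]:
    """Total tokens (input + output) spent in each phase across all reviews."""
    totals: Dict[str, int] = defaultdict(int)
    for record in records:
        totals[record.phase] += record.input_tokens + record.output_tokens
    return dict(totals)


def average_tokens_per_review(records: List[UsageRecord]) -> float:
    """Mean total tokens per review; 0.0 when there are no records."""
    per_review: Dict[str, int] = defaultdict(int)
    for record in records:
        per_review[record.review_id] += record.input_tokens + record.output_tokens
    if not per_review:
        return 0.0
    return sum(per_review.values()) / len(per_review)
```

Breaking the totals down by phase should show which phase is worth optimising first.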

Bonus: RCAs

I’ve also been exploring having the agent do some part of RCAs using a prompt like the one below:

See commit xxxx - this commit fixes the issue <describe issue>.

Could this issue have been caught by automation?

Identify a new standard eslint rule or a new custom eslint rule or a new code review checklist (see docs/process/code-review-ui-checklist.md) or new test review checklist (see docs/testing/tests-review-checklist.md) OR all of these which would have helped.
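
To reuse the prompt across incidents, it can be filled in from a small template. The sketch below is only one way to do that: the function name `build_rca_prompt` and its parameters are hypothetical, while the prompt wording and the checklist paths are the ones shown above.

```python
RCA_PROMPT_TEMPLATE = (
    "See commit {commit} - this commit fixes the issue {issue}.\n\n"
    "Could this issue have been caught by automation?\n\n"
    "Identify a new standard eslint rule or a new custom eslint rule or "
    "a new code review checklist (see docs/process/code-review-ui-checklist.md) "
    "or new test review checklist (see docs/testing/tests-review-checklist.md) "
    "OR all of these which would have helped."
)


def build_rca_prompt(commit: str, issue: str) -> str:
    """Fill the RCA prompt with a commit reference and a short issue description."""
    commit = commit.strip()
    issue = issue.strip().rstrip(".")
    if not commit or not issue:
        # Refuse to build a prompt with a blank placeholder.
        raise ValueError("both commit and issue are required")
    return RCA_PROMPT_TEMPLATE.format(commit=commit, issue=issue)
```

The commit reference and issue description are supplied by whoever runs the RCA; the template itself stays fixed so that results are comparable between incidents.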

Earlier posts on this topic can be found here. The charts were generated using the GitHub project visualiser.